15 Key Takeaways from the Serverless Talk at AWS Startup Day

15 key takeaways from Chris Munns' "The Best Practices and Hard Lessons Learned of Serverless Applications" talk at AWS Startup Day Boston.

I love learning about the capabilities of AWS Lambda functions, and typically consume any article or piece of documentation I come across on the subject. When I heard that Chris Munns, Senior Developer Advocate for Serverless at AWS, was going to be speaking at AWS Startup Day in Boston, I was excited. I was able to attend his talk, The Best Practices and Hard Lessons Learned of Serverless Applications, and it was well worth it.

Chris said during his talk that all of the information he presented is on the AWS Serverless site. However, there is a lot of information out there, so it was nice to have him consolidate it into a 45-minute talk. There was some really insightful information shared and lots of great questions. I was aware of many of the topics discussed, but there were several clarifications and explanations (especially around the inner workings of Lambda) that were really helpful. 👍

Here are my 15 key takeaways from his talk:

1. Tweaking your function's computing power has major benefits

Setting your Lambda function's memory is the only "server" configuration option you have. Even so, this allows for quite a bit of control over the performance of your function. Chris gave an example that ran a Lambda function 1,000 times that calculated all prime numbers less than 1,000,000. Here were the results:

| Memory Allocation | Execution Time | Cost |
|---|---|---|
| 128 MB | 11.72296 sec | $0.024628 |
| 256 MB | 6.67894 sec | $0.028035 |
| 512 MB | 3.194954 sec | $0.026830 |
| 1024 MB | 1.46598 sec | $0.024638 |

As you can see, the least expensive option is to use 128 MB. But for just $0.00001 more, the 1024 MB setting saves 10.25698 seconds in execution time. He also mentioned a study done by New Relic that noted a change in the 95th percentile of response times from 3 seconds to 2.1 seconds when increasing memory by 50%. Certainly something worth looking at with your own functions.

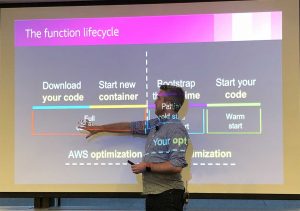
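To make the trade-off concrete, here is a small sketch that compares each row of the table against the cheapest one. The numbers are copied straight from the table above; the function name `compare_to_cheapest` is just something I made up for illustration.

```python
# Figures copied from the table above: (memory in MB, seconds, cost in USD).
RESULTS = [
    (128, 11.72296, 0.024628),
    (256, 6.67894, 0.028035),
    (512, 3.194954, 0.026830),
    (1024, 1.46598, 0.024638),
]


def compare_to_cheapest(results):
    """Return (memory, extra_cost, seconds_saved) for each row vs. the cheapest row."""
    cheapest = min(results, key=lambda row: row[2])
    return [
        (mem, round(cost - cheapest[2], 6), round(cheapest[1] - secs, 5))
        for mem, secs, cost in results
    ]


for mem, extra_cost, saved in compare_to_cheapest(RESULTS):
    print(f"{mem:>5} MB: +${extra_cost:.6f}, {saved:.5f} s faster")
```

Running the same comparison against your own function's timings is a quick way to see whether a higher memory setting pays for itself.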

2. Only use a VPC Lambda if you need to

I wrote a post about this, but this is an interesting perspective that the Serverless team takes as opposed to basically everyone else at AWS. VPCs in Lambda are expensive in terms of startup times since they need to create ENIs. He had some great graphics that showed how Lambda functions were bootstrapped and explained how the internal connections are set up. Interesting stuff, but the bottom line is: only use a VPC if you need to connect to VPC resources. Otherwise, the default VPC that Lambdas run in is plenty secure and much faster. On a positive note, Chris did say that they are working hard to reduce VPC Lambda bootstrapping.

3. VPC Lambdas are kept warm longer than non-VPC Lambdas

4. Functions with more than 1.8GB of memory are multi-core

This was a really interesting point. CPU resources, I/O, and memory are all affected by your memory allocation setting. If your function is allocated more than 1.8GB of memory, then it will utilize a multi-core CPU. If you have CPU-intensive workloads, increasing your memory to more than 1.8GB should give you significant gains. The same is true for I/O-bound workloads like parallel calculations.

5. Lambda functions are completely isolated from the public Internet

I've heard this before, but the internal EC2 instances that Lambda containers run on are completely isolated from the public Internet. AWS invokes these functions via a control plane, which means that there is no way to connect to their VPCs behind the scenes. This is great from a security standpoint since you don't have to worry about port scanning or other network vulnerabilities that would inadvertently invoke your functions and cost you money.

6. Give your functions their own VPC subnets

On the subject of VPC-based Lambdas, Chris suggested creating subnets specifically for your Lambda functions instead of sharing ones with other VPC resources. Subnets in a VPC can access other subnets in that VPC, so isolating your Lambdas and allocating them their own IP ranges to be used with ENIs makes a ton of sense.
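As a rough sketch of what planning those dedicated ranges might look like, the snippet below carves two subnets out of a VPC range and counts the addresses left over for ENIs. The `10.0.0.0/16` range, the `/24` size, and the helper names are all assumptions of mine, not something from the talk. The one hard fact baked in is that AWS reserves five addresses in every subnet.

```python
import ipaddress

# AWS reserves the first four addresses and the last address of every subnet.
AWS_RESERVED_PER_SUBNET = 5


def lambda_subnets(vpc_cidr, new_prefix, count):
    """Carve the last `count` subnets of size /new_prefix out of the VPC range."""
    all_subnets = list(ipaddress.ip_network(vpc_cidr).subnets(new_prefix=new_prefix))
    return all_subnets[-count:]


def usable_ips(subnet):
    """Addresses in the subnet that are actually available for ENIs."""
    return subnet.num_addresses - AWS_RESERVED_PER_SUBNET


# Hypothetical layout: a 10.0.0.0/16 VPC with two /24 subnets set aside for Lambda.
for subnet in lambda_subnets("10.0.0.0/16", 24, 2):
    print(subnet, usable_ips(subnet))
```

Sizing the subnets this way makes it easier to see how many ENIs your functions can claim before they start competing with anything else.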

7. The CloudWatch Events "ping" hack is kind of a best practice (for now)

Another hotly debated topic around the web is the infamous "CloudWatch Events 'ping' hack" and whether or not it is the best way to keep a Lambda function warm. According to Chris, it's really the only way to do it right now. While he acknowledged that it is a "hack", he did give some great tips on how to do it "correctly" to save you money. Key points were:

- Don't ping more often than every 5 minutes

- Invoke the function directly (i.e. don't use API Gateway to invoke it)

- Pass in a test payload that can be identified as such

- Create handler logic that replies accordingly without running the whole function
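The last two points can be sketched in a few lines. This is a minimal example, assuming the scheduled rule sends a payload like `{"warmup": true}`; the `warmup` key and the `do_real_work` placeholder are names I picked for illustration.

```python
import json

WARMUP_KEY = "warmup"  # hypothetical marker; use whatever your scheduled rule sends


def do_real_work(event):
    """Placeholder for the function's actual business logic."""
    return {"statusCode": 200, "body": json.dumps({"received": event})}


def handler(event, context):
    # Short-circuit on the ping payload so a warm-up costs as little as possible.
    if isinstance(event, dict) and event.get(WARMUP_KEY):
        return {"warmed": True}
    return do_real_work(event)
```

Because the check happens before anything else, a ping returns almost immediately and never touches databases or downstream services.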

8. Use functions to TRANSFORM, not TRANSPORT

This was a minor point, but I think it's worth mentioning. Lambda functions should be used to transform data, e.g. reformat, enrich, etc., not to simply move data from point A to point B. For example, don't use Lambda functions to download data from an S3 bucket and then upload it to another one. There are internal tools like S3.copy that will perform those actions for you.
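For example, here is a sketch of a server-side copy using the S3 `copy_object` call as exposed by boto3. Whether this is exactly what was meant by S3.copy in the talk is my assumption. The client is passed in as a parameter, and the bucket and key names in the usage comment are made up.

```python
def copy_between_buckets(s3_client, src_bucket, src_key, dest_bucket, dest_key):
    """Ask S3 to copy the object server-side instead of downloading and re-uploading it."""
    return s3_client.copy_object(
        Bucket=dest_bucket,
        Key=dest_key,
        CopySource={"Bucket": src_bucket, "Key": src_key},
    )


# Usage (assumes boto3 is installed and credentials are configured):
#   import boto3
#   copy_between_buckets(boto3.client("s3"), "incoming", "a.csv", "archive", "a.csv")
```

The object's bytes never pass through your function, so you only pay for the milliseconds it takes to make the API call. Note that a single `copy_object` call is limited to objects of up to 5 GB.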

9. Use Step Functions to perform orchestration

You pay for the time that your Lambda function is running. If your process requires a lot of orchestration (i.e. you'll be waiting on other processes to finish before continuing execution), use AWS Step Functions instead. Step Functions allows you to trigger functions in succession based on the result of each function in your pipeline. It is a powerful tool that helps add resiliency to your process as well.
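To give a feel for what that looks like, here is a rough sketch of a two-step state machine written in the Amazon States Language and built as a Python dictionary. The state names, the retry settings, and the ARNs are placeholders I invented, not anything shown in the talk.

```python
import json


def build_state_machine(validate_arn, process_arn):
    """Return an Amazon States Language definition that runs two Lambda tasks in sequence."""
    return {
        "Comment": "Hypothetical two-step pipeline",
        "StartAt": "Validate",
        "States": {
            "Validate": {
                "Type": "Task",
                "Resource": validate_arn,
                "Next": "Process",
            },
            "Process": {
                "Type": "Task",
                "Resource": process_arn,
                # Retry failed task runs with exponential backoff.
                "Retry": [{
                    "ErrorEquals": ["States.TaskFailed"],
                    "IntervalSeconds": 2,
                    "MaxAttempts": 3,
                    "BackoffRate": 2.0,
                }],
                "End": True,
            },
        },
    }


definition = build_state_machine(
    "arn:aws:lambda:us-east-1:123456789012:function:validate",  # placeholder ARN
    "arn:aws:lambda:us-east-1:123456789012:function:process",   # placeholder ARN
)
print(json.dumps(definition, indent=2))
```

The waiting and retrying happens inside the state machine, so neither function sits idle on the clock while the other one runs.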

10. Optimize for your language

I use the Serverless optimizer plugin on my functions, but I was always curious as to the overall benefit of taking that approach. According to Chris, optimizing for your language is a good thing. If you're using Node.js, using tools like browserify and minifying your code are key optimizations. This reduces the number of unused dependencies and makes your code smaller. He also mentioned avoiding monolithic functions. The dependencies needed for all of the function's features need to be loaded into memory every time and can significantly impact your function's performance.

11. Don't create unnecessary communication layers

Another good tip was to minimize the number of communication layers between your functions. If the situation makes sense, invoke a Lambda function directly from another Lambda function using the AWS SDK instead of routing it through an API Gateway. This might not always be the right choice for your application, but you should certainly consider it as your first option to minimize points of failure.
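A direct invocation might look something like the sketch below, which uses the Lambda `invoke` call as exposed by boto3. The client is passed in as a parameter, and the function name and payload in the usage comment are hypothetical.

```python
import json


def invoke_directly(lambda_client, function_name, payload):
    """Synchronously invoke another function and return its decoded JSON response."""
    response = lambda_client.invoke(
        FunctionName=function_name,
        InvocationType="RequestResponse",  # use "Event" for fire-and-forget
        Payload=json.dumps(payload).encode("utf-8"),
    )
    # The response payload is a stream of bytes containing the callee's return value.
    return json.loads(response["Payload"].read())


# Usage (assumes boto3 is installed and the caller's role allows lambda:InvokeFunction):
#   import boto3
#   invoke_directly(boto3.client("lambda"), "resize-image", {"key": "photo.jpg"})
```

Keep in mind that with a synchronous call the calling function is billed while it waits, so this fits best when the callee is fast.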

12. Filter uninteresting events

This is a great tip for Lambdas that are processing SNS events or reacting to S3 actions. Rather than writing logic within your Lambda functions to discard or route events, you can apply filtering that limits the events sent to the Lambda. This can reduce code complexity and costs by not triggering unnecessary invocations. SNS message filtering can limit which messages a topic sends to a function, and an S3 event prefix (or suffix) can split your processing between JPGs and PNGs, for example.

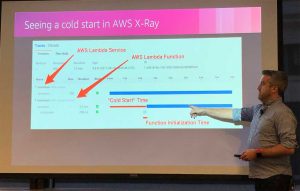
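Here is a sketch of the JPG/PNG split expressed as an S3 notification configuration. The helper name, the ARNs, and the `uploads/` prefix are assumptions for illustration; the dictionary shape follows what the S3 `put_bucket_notification_configuration` call expects.

```python
def suffix_notification(function_arn, suffix, prefix=None):
    """Build one Lambda notification entry that only fires for matching object keys."""
    rules = [{"Name": "suffix", "Value": suffix}]
    if prefix is not None:
        rules.append({"Name": "prefix", "Value": prefix})
    return {
        "LambdaFunctionArn": function_arn,
        "Events": ["s3:ObjectCreated:*"],
        "Filter": {"Key": {"FilterRules": rules}},
    }


# Placeholder ARNs: one function per image type, both limited to the uploads/ prefix.
notification_configuration = {
    "LambdaFunctionConfigurations": [
        suffix_notification(
            "arn:aws:lambda:us-east-1:123456789012:function:process-jpg",
            ".jpg",
            prefix="uploads/",
        ),
        suffix_notification(
            "arn:aws:lambda:us-east-1:123456789012:function:process-png",
            ".png",
            prefix="uploads/",
        ),
    ]
}

# Usage (assumes boto3 and a bucket you own):
#   boto3.client("s3").put_bucket_notification_configuration(
#       Bucket="my-bucket", NotificationConfiguration=notification_configuration)
```

With this in place, neither function is ever invoked for a file type it would only throw away.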

13. Turn on X-Ray

"To ensure efficient tracing and provide a representative sample of the requests that your application serves, the X-Ray SDK applies a sampling algorithm to determine which requests get traced. By default, the X-Ray SDK records the first request each second, and five percent of any additional requests."

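To get a feel for what that default means in practice, here is a back-of-the-envelope sketch of the expected number of traced requests per second. It assumes a steady request rate, and the function and parameter names are mine.

```python
def expected_traces_per_second(requests_per_second, fixed_target=1, rate=0.05):
    """Expected traced requests per second under the default rule quoted above.

    The first `fixed_target` requests each second are always traced, and
    `rate` (five percent by default) of the remainder are sampled.
    """
    if requests_per_second <= fixed_target:
        return float(requests_per_second)
    return fixed_target + rate * (requests_per_second - fixed_target)


for rps in (1, 10, 100, 1000):
    print(rps, expected_traces_per_second(rps))
```

At 100 requests per second that works out to roughly 6 traces per second (1 + 0.05 × 99 = 5.95), so tracing stays cheap even on busy functions.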
14. Use metric filters for better observability

This is a great feature that is often underutilized. Chris stressed the importance of logging in your Lambda functions. Since writing to CloudWatch Logs happens asynchronously, it has very little impact on the overall performance of your application. The point that Chris made was to use these logs to create metric filters in CloudWatch for better visibility into your app. For example, every time a new user is created, you would write that to the log. A metric filter could then tally those events and provide you with a CloudWatch metric that can be monitored and can trigger an alarm. Very cool.
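The new-user example could be sketched like this: the function writes one JSON line per event, and a metric filter with a JSON pattern counts the matching lines. The event name, field names, and namespace are hypothetical; the keyword arguments mirror the CloudWatch Logs `put_metric_filter` call.

```python
import json


def log_event(event_name, **fields):
    """Print one JSON log line; Lambda forwards stdout to CloudWatch Logs."""
    line = json.dumps({"event": event_name, **fields})
    print(line)
    return line


def metric_filter_kwargs(log_group, event_name, namespace):
    """Arguments for a put_metric_filter call that counts one event type."""
    return {
        "logGroupName": log_group,
        "filterName": f"{event_name}-count",
        # JSON filter pattern: match log lines whose "event" field equals event_name.
        "filterPattern": f'{{ $.event = "{event_name}" }}',
        "metricTransformations": [{
            "metricName": event_name,
            "metricNamespace": namespace,
            "metricValue": "1",  # add 1 to the metric for every matching line
        }],
    }


log_event("user_created", user_id="u-123")  # hypothetical event and field names

# Usage (assumes boto3 and an existing log group):
#   boto3.client("logs").put_metric_filter(
#       **metric_filter_kwargs("/aws/lambda/signup", "user_created", "MyApp"))
```

Once the metric exists, you can graph it or attach an alarm to it like any other CloudWatch metric.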

15. Use Dead Letter Queues to save event invocation data

Serverless architectures rely heavily on asynchronous communication between functions and services. This often creates points of failure which can leave processes in an incomplete state when messages go undelivered. Luckily for us, Dead Letter Queues (DLQs) can be set up to catch failed communications. An asynchronously invoked Lambda function will be retried twice by default, and if it still fails, the DLQ will catch the invocation data. We can even use SQS triggers to process these failed events.
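Attaching a DLQ to an existing function can be sketched with the Lambda `update_function_configuration` call from boto3. The client is passed in as a parameter, and the function name and queue ARN in the usage comment are placeholders.

```python
def attach_dead_letter_queue(lambda_client, function_name, queue_arn):
    """Send events that still fail after the async retries to an SQS queue or SNS topic."""
    return lambda_client.update_function_configuration(
        FunctionName=function_name,
        DeadLetterConfig={"TargetArn": queue_arn},
    )


# Usage (assumes boto3; the function's execution role needs sqs:SendMessage on the queue):
#   import boto3
#   attach_dead_letter_queue(
#       boto3.client("lambda"),
#       "process-order",
#       "arn:aws:sqs:us-east-1:123456789012:process-order-dlq",
#   )
```

From there, a second function triggered by the queue can inspect, log, or replay the failed events.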

Wrapping up

Lambda is getting better all the time, and I really appreciate how much AWS has done for serverless over the last few years. While there are still some challenges and limitations, talks like this really help developers like me understand best practices and learn the nuances of the platform. Thanks again to Chris Munns and the AWS team for putting on a great event. 🙌